Is your workforce AI-ready?

Measure AI readiness across your organisation with evidence-based assessments. Identify AI skill gaps, benchmark your team, and make informed decisions about AI training and adoption.

Send your first assessment now with 3 free credits.

Why AISA for AI readiness assessment

Enterprises invested $37B in AI tools in 2025. Over half of employees abandon them entirely. Only 7.5% received meaningful AI training. The gap isn't technology — it's workforce AI readiness.

Sources: BCG AI at Work 2025 · Fast Company · IDC

Conversational AI skills assessment

A 20–40 minute adaptive dialogue — not a multiple-choice AI competency test. The AI interviewer responds to what your employees actually say, probing deeper when it matters.

Evidence-based AI skill gap analysis

Every score is linked to a direct quote from the conversation. No black boxes, no vibes — auditable evidence for every employee AI skills evaluation.

Anti-gaming integrity

Detects AI-generated answers, copy-paste, and style shifts. Your AI readiness assessment results reflect genuine proficiency, not rehearsed responses.

One-click AI upskilling for your team

Turn assessment results into action. Enrol employees into personalised AI coaching on WhatsApp with a single click — each person gets their own AI coach built from their individual results.

Workforce AI maturity assessment

An AI readiness assessment tool and framework for organisations to measure workforce AI readiness and run AI competency assessments for teams.

AI training needs assessment

Identify skill gaps and plan targeted corporate AI training for employees, managers, and business leaders with evidence, not guesswork.

AI talent assessment

Screen candidates for AI adoption readiness during hiring with an evidence-based AI proficiency test for hiring decisions.

AI skills assessment for teams and hiring

Is your team AI-ready?

Run an organisation-wide AI readiness assessment to understand where your workforce stands. Get team-level AI skill gap analysis, identify who needs targeted AI training, and make data-driven upskilling decisions.

- AI readiness and maturity assessment across 5 dimensions

- Team-wide AI skill gap analysis and reporting

- Individual persona profiles for every employee

- Prioritised AI training needs assessment

Is this candidate AI-ready?

Use AISA as a pre-employment AI proficiency test for hiring. Screen candidates for real AI adoption readiness — not self-reported claims on a CV. Every score is tied to evidence from the conversation.

- AI competency assessment before the interview

- Evidence-based AI skills screening

- Compare candidates on the same rubric

- AI talent assessment that goes beyond keywords

How AI readiness assessment works

Apply for an employer account

Submit your application and get approved. Your account includes 3 free assessment credits to start.

Invite employees or candidates

Send AI readiness assessment invites directly from your dashboard. Each person gets a unique link.

Get individual, team, and org-level analytics

As assessments complete, your dashboard fills with per-person reports, team intelligence, and organisation-wide AI readiness scores.

Presentable & actionable insights

Board-ready AI readiness reports with clear gap analysis, priority actions, and a 90-day roadmap to reach AI readiness.

Your ready-to-present Employer Dashboard

The AI readiness assessment framework that gives you individual, team, and organisation-level intelligence — all from one assessment.

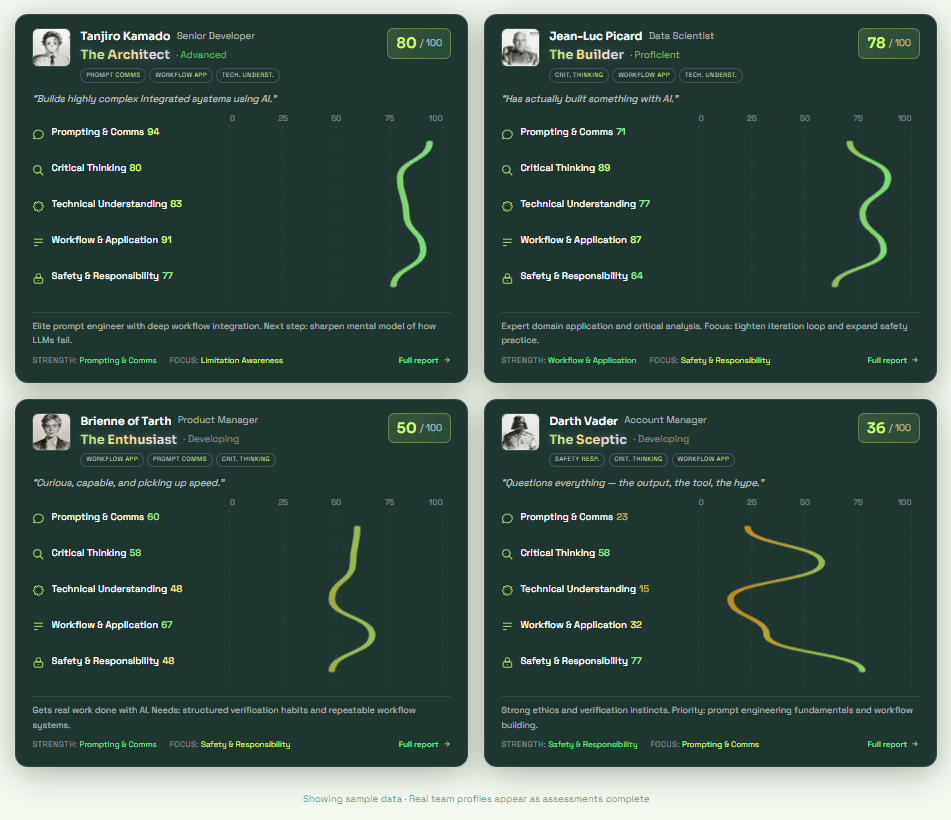

People

Individual AI profiles with persona classification, dimension scores, and personalised growth plans.

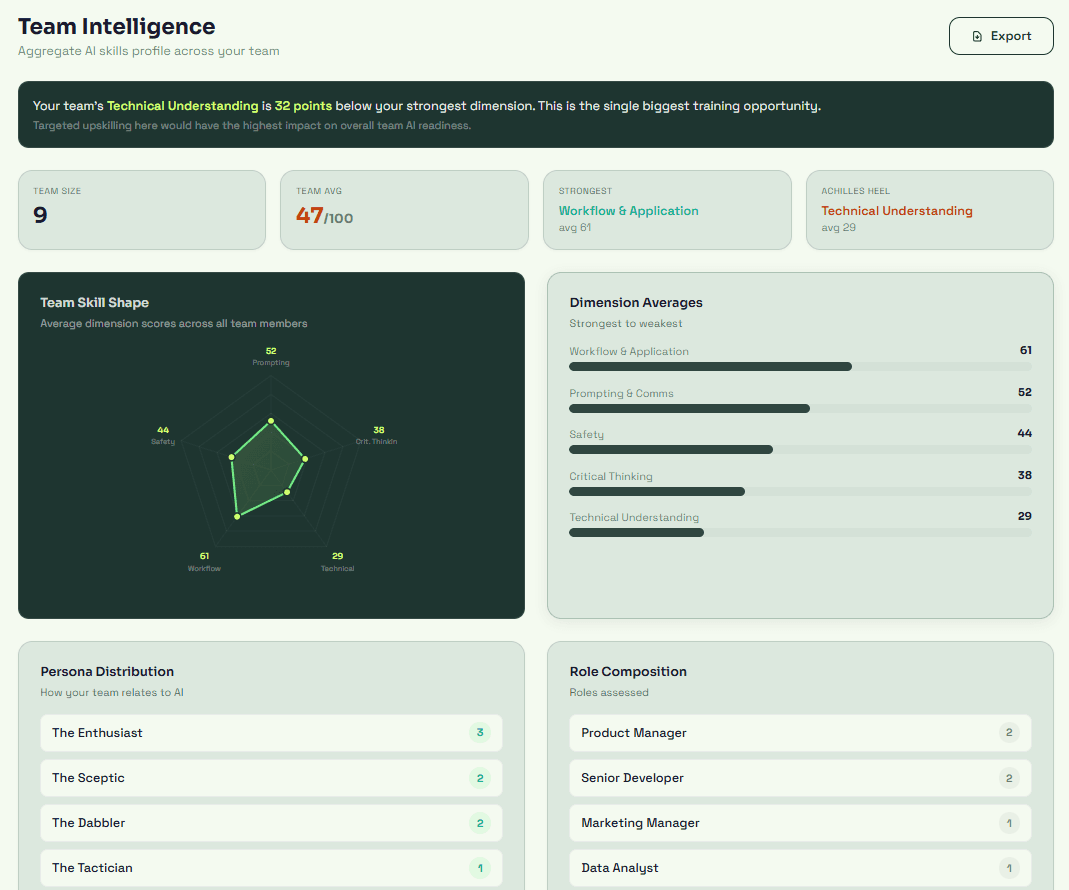

Team Intelligence

Aggregate team view with radar chart, strengths, Achilles heel, and recommended actions.

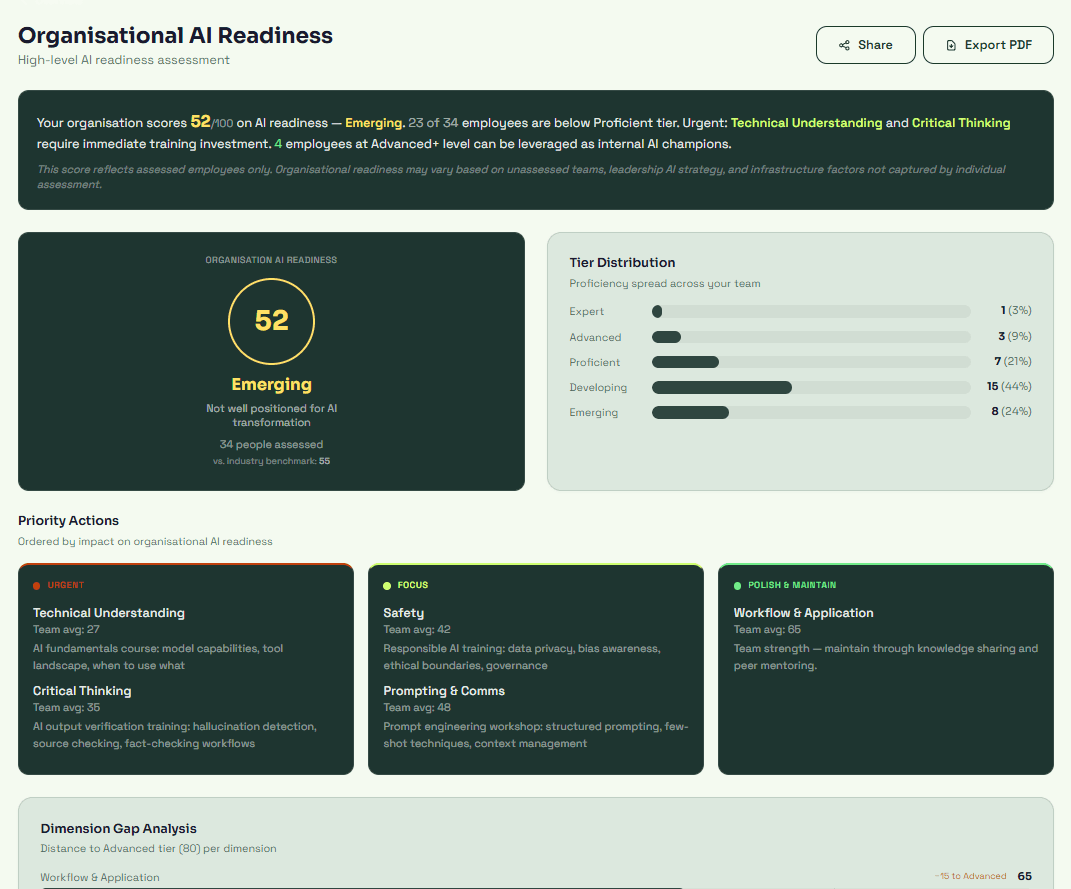

AI Readiness

Organisation-level readiness score, tier distribution, priority actions, and 90-day roadmap.

per assessment · pay as you go

- Zero startup cost

- No subscription

- No per-seat licensing

- 3 free credits included

Get approved and start sending invites in 5 minutes.

Full AI readiness intelligence in 2 days.

Enterprise-ready security

How AISA compares to other AI readiness assessment tools

Built for AI fluency from day one — not bolted onto coding tests.

| What employers need | AISA | Sapia | HackerRank | Codility | TestGorilla | iMocha | HireVue |
|---|---|---|---|---|---|---|---|
| AI skills as core focus | ✓ | ✗ | Add-on | ✗ | ✗ | MCQ | ✗ |
| Individual, team & org analytics | ✓ | ✗ | ✗ | ✗ | ✗ | Partial | ✗ |
| Board-ready AI readiness reports | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Quote-backed evidence for scores | ✓ | ✓ | ✓ | ✓ | Partial | Partial | ✓ |
| Anti-gaming & AI copy-paste detection | ✓ | ✗ | Basic | Basic | ✗ | ✗ | Partial |
| Triple-agent architecture (50+ systems) | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Fully conversational assessment | ✓ | Add-on | ✗ | ✗ | ✗ | Video | Partial |
| Adaptive and role-specific | ✓ | Fixed Qs | ✓ | ✓ | ✗ | ✗ | ✗ |
| LinkedIn-ready AI certificate | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |

AI readiness assessment FAQ

What is an AI readiness assessment?

An AI readiness assessment measures how effectively your team can use AI tools in their work. AISA evaluates employees across 11 criteria and 5 dimensions through a 20–40 minute conversation — not a quiz. Every score is tied to evidence from the conversation.

How does AISA measure employee AI skills?

AISA uses a conversational AI assessment where an AI interviewer has a natural dialogue with each employee. A separate evaluation model silently scores their responses against a rubric. This dual-track approach produces evidence-based AI skill evaluations that self-assessment surveys cannot match.

Can I use AISA for AI skills screening during hiring?

Yes. Invite candidates to take the assessment and receive detailed AI proficiency reports before making hiring decisions. Each report includes persona classification, dimension breakdowns, and specific evidence — far more useful than a CV claim of "proficient with AI tools."

What does the AI skill gap analysis include?

Each assessment produces individual dimension scores, persona classification, and a prioritised learning plan. At team level, you get aggregate gap analysis showing where your workforce is strong and where targeted AI training is needed.

Can AISA help with AI training needs assessment?

Absolutely. The assessment identifies exactly where each employee needs AI upskilling — by dimension, by criterion, and by priority level. Use the results to plan corporate AI training that targets real gaps instead of guessing.

How much does it cost to assess a team?

Assessments start at $10 per credit. Volume discounts bring the price down to $4.27 per assessment for larger teams. No subscription required — buy credits as you need them.

Is this suitable for non-technical employees?

Yes. AISA adapts the conversation to each person's role and experience level. Whether you need AI training for managers, business leaders, HR professionals, or developers, AISA applies the same evidence-based rubric with role-appropriate framing.

What is an AI readiness test?

An AI readiness test measures whether your workforce can effectively use AI tools in their roles. Unlike self-assessment surveys or multiple-choice quizzes, AISA's AI readiness test uses a real conversation to gather evidence — scoring each person across 11 criteria and 5 dimensions. The result is an objective, evidence-based readiness score tied to demonstrated proficiency, not self-reported confidence.

What's the difference between an AI readiness assessment and an AI maturity assessment?

An AI maturity assessment typically evaluates organisational processes and infrastructure. An AI readiness assessment focuses on people — can your team actually use AI effectively? AISA measures individual AI readiness through conversation, then aggregates into team and org-level maturity scores.

How do you measure AI skills across employees?

Each employee has a 20–40 minute conversation with AISA's AI interviewer. A separate AI evaluates every response against 11 criteria across 5 dimensions. The result is an evidence-based employee AI skills assessment rather than a self-reported survey. Aggregate the results to measure AI skills across your entire workforce.

For individuals

Get your personal AI skills certificate

Take the free AI skills assessment and prove your AI proficiency with a LinkedIn-verifiable certificate.

Take the free AI skills test

AI coaching

Personalised AI training for your team

After assessment, each employee gets a personalised AI coach on WhatsApp — targeting their specific skill gaps.

Learn about AI Coach

Prefer to talk first?

Book a call with the founder

Have questions about team rollouts, volume pricing, or how AISA fits your hiring process? Let's talk.

Get in touch

The Science Behind AISA

In 2026, Anthropic published the AI Fluency Index, the largest empirical study of AI fluency to date, analysing 9,830 conversations. AISA covers 93% of the behaviours Anthropic identified as markers of AI fluency and goes even deeper with 4 additional dimensions.

Read our white paper: Anthropic's AI Fluency Study & AISA

AISA's framework is developed by a team with deep roots in tech, behavioural science, and AI product leadership — the rubric is informed by backgrounds spanning the Metropolitan Police, Harvard, Crowdbotics (Silicon Valley), and the European School of Economics.